Bill Simmons and Malcolm Gladwell were an hour into another one of their freewheeling and entertaining discussions on Simmons’ podcast when Gladwell made a provocative claim: “I have yet to be convinced that there is any great predictability to player selection in any of the professional leagues,” he said. “It is ultimately a roll of the dice. Half the guys — not all of them — at least half of the people who are called wizards of talent evaluation are not wizards of talent evaluation. They got lucky.”

Whether you’re an NBA front office employee or a fan of the draft who pays a lot of attention to the breakdowns and profiles that proliferate this time of year, this probably sounds jarring. NBA teams are spending vast sums of money every season in an attempt to properly evaluate players; and in this day and age, with almost every game broadcast on TV and statistics readily accessible, fans and media members can form in-depth, well-reasoned opinions about prospects. Can this all be a giant exercise in futility? Can what Gladwell says really be true?

Ultimately I don’t agree with Gladwell — he goes too far with his claim. But following his line of thinking draws us along a path that can help us see the draft from a new perspective.

The True Fools

Darko Milicic. Hasheem Thabeet. Adam Morrison. Kwame Brown. In the minds of NBA fans their names are synonymous with failure, both the players’ failure to live up to the high expectations of a top 3 pick and the failure of the GMs who drafted them to identify their clear flaws. This, of course, is far from a complete list of high pick busts: in the 10 drafts from 2003 to 2012,[1] about 30% of top 10 picks did not even turn into high level backups, let alone regular starters. In many ways, the history of the draft is a history of highly flawed predictions.

The 2011 draft is a perfect example of this. The top 5 picks produced one All-Star (Kyrie Irving) and some solid players (Enes Kanter, Tristan Thompson, and Jonas Valanciunas). But the rest of the draft contained four multi-time All-Stars: the 11th pick was Klay Thompson, the 15th Kawhi Leonard, the 30th Jimmy Butler and the 60th Isaiah Thomas. That is a pretty big indictment of NBA talent evaluators. How could so many teams miss so badly on these players?

That 2011 draft is an outlier. Most are not quite this extreme, but they still follow the general pattern. The previous draft, in 2010, saw Evan Turner, Derrick Favors and Wesley Johnson all go in the top 5, while Gordon Hayward and Paul George slipped to 9 and 10. And in the following draft, 2012, Michael Kidd-Gilchrist (2) and Thomas Robinson (5) went in the top 5, while Damian Lillard (6), Andre Drummond (9) and Draymond Green (35) all went later.

The draft is inherently unpredictable. Attempting to forecast the futures of athletes in their late teens and early 20s with a high degree of accuracy is an enormously difficult exercise. You don’t have to study the history of the draft for long to come to that conclusion.

So take the breathless declarations of upside, the spotlighting of theoretically immutable flaws, and the day-after draft grades for what they are — entertainment — and remember: anyone who goes on the record about the draft will look foolish in the long run. The true fools, though, are those that don’t have the humility to acknowledge that.

Wizards of Talent Evaluation?

But, one might say, aren’t those mistakes just evidence that the people who made them didn’t know what they were doing? That if they were truly good at their jobs they would have avoided these clear blunders? Unfortunately we’ll never really know the answer to that. A variety of factors conspire to make it almost impossible to judge the ability of talent evaluators from the outside.

The biggest impediment is that with draft decisions the samples are small and the time horizons long. Let’s say there’s one team that really has an eye for talent, and they have a 70% chance each draft of getting their first round pick right (however you define “right”), while another team is very poor at scouting the draft, and only has a 30% chance of getting their first rounder correct each year. Even over the course of three drafts, the clueless front office still has roughly a 1 in 4 chance (about 26%, if each pick is treated as an independent event) of matching or exceeding the performance of the expert front office! There just aren’t enough drafts for the typical decision maker to build up a sample of work that would mitigate the role of luck.
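For anyone who wants to check that arithmetic, here is a minimal sketch. It assumes one first round pick per draft, a simple right-or-wrong outcome, and independence from year to year; those are simplifications for illustration, and the function names are made up for this example.

```python
from math import comb


def binomial_pmf(n_drafts: int, hits: int, hit_rate: float) -> float:
    """Probability of exactly `hits` correct picks in `n_drafts` independent drafts."""
    return comb(n_drafts, hits) * hit_rate**hits * (1 - hit_rate) ** (n_drafts - hits)


def prob_weak_matches_or_beats_strong(
    n_drafts: int, p_strong: float, p_weak: float
) -> float:
    """Chance the weaker front office gets at least as many picks right as the stronger one."""
    total = 0.0
    for strong_hits in range(n_drafts + 1):
        # The weak team "matches or exceeds" when its hit count is >= the strong team's.
        for weak_hits in range(strong_hits, n_drafts + 1):
            total += binomial_pmf(n_drafts, strong_hits, p_strong) * binomial_pmf(
                n_drafts, weak_hits, p_weak
            )
    return total


if __name__ == "__main__":
    # 70% vs. 30% hit rates over three drafts, as in the example above.
    print(round(prob_weak_matches_or_beats_strong(3, 0.7, 0.3), 3))  # 0.256
    # Longer track records shrink the role of luck under the same assumptions.
    for n in (5, 10, 20):
        print(n, round(prob_weak_matches_or_beats_strong(n, 0.7, 0.3), 3))
```

Under these assumptions the three-draft figure comes out to about 26%, and it keeps falling as the number of drafts grows, which is the sense in which a longer record would let skill show through.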

One example of this kind of luck: a team can get a draft pick correct, but for the wrong reasons. It was reported that, as GM of the Atlanta Hawks in 2007, Billy Knight wanted to select Al Horford (at least in part) because of the size of Horford’s butt. Horford, of course, has turned into a very good selection. But if that pick was made for a reason that seems tangential (if not completely unrelated) to Horford’s success, then that doesn’t make the person who selected him a “wizard”.[2]

Similarly, one could argue that talent evaluators shouldn’t get any special credit for taking a player in the range where most people expected him to go, no matter how that player turned out. Draymond Green, for example, was taken 35th in the 2012 draft. But this was around the range where most teams would have taken him: if Golden State hadn’t taken him at 35, some other team likely would have shortly thereafter, and that team would be lauded instead. Green’s selection, then, might have been less about great talent evaluation and more about simply being in the right place at the right time. Bob Myers, the Warriors’ GM, has even said as much, acknowledging that if the Warriors had known Green would be this good they wouldn’t have passed on him at #7 and again at #30: “We kind of blew it. But at least we got him.”[3]

And all of this assumes we can even identify what the correct pick actually is. Usually the quality of those decisions only becomes clear long after the selection is made (and sometimes not even then). A fun exercise for those playing along at home: each year, go back to the previous few drafts and perform a re-draft, ranking the players in order of how they would be picked if the draft were performed again given what everyone knows about them now. You’ll find that even years out your re-draft will change each time you do it, as players make unexpected leaps in performance and others can’t maintain their production or never realize their potential.

But even if we waited patiently for decision makers to build up a real draft record and players to fully develop, it would still be a challenge to assess their decisions. As NBA writer Derek Bodner has often noted, without seeing each team’s full draft board we actually can’t judge the quality of their decisions, since teams are at the whim of who selects before them. If a player they evaluate incorrectly is taken ahead of them and a player they get right slips to them, it will seem like that front office is much better than the one that picked before them — even though they got lucky to avoid a misstep. Imagine, for example, that the Oklahoma City Thunder (who picked 3rd) had the same draft board as Memphis (who picked 2nd) in 2009, ranking Hasheem Thabeet ahead of James Harden. Whichever team had the #2 pick in that draft would end up coming out looking terrible, even though their evaluations were identical.

Put it all together, and good luck telling which GMs are truly wizards of talent evaluation, and which are merely Oz behind the curtain.

But We Do Know Something

The history of the draft is full of blunders and talent evaluators can’t easily be evaluated. That’s a lot of support for Gladwell’s claim that there is no great predictability to player selection. If he’s right, then the current approach to drafting is the same as a GM putting all of the names of potential draftees into a hat, squeezing their eyes shut and fishing out a slip of paper. Except with millions of dollars spent along the way convincing people that there is a strategy to how they picked out that specific slip of paper.

But, just as history shows the mistakes of the draft, it also shows the successes. While imperfect, there is an order to the selections made. Earlier we saw that in a recent 10 year period, 30% of top 10 picks ended up not even being high level backups. This is certainly not evidence in favor of accurate talent evaluation. Yet if we shift our gaze to the next 10 picks, 11 through 20, we find that 60% of them ended up not making it as high level backups. This continues through the rest of the draft: for picks 21-40 that number is 75%, and for picks 41-60 it’s close to 90%.

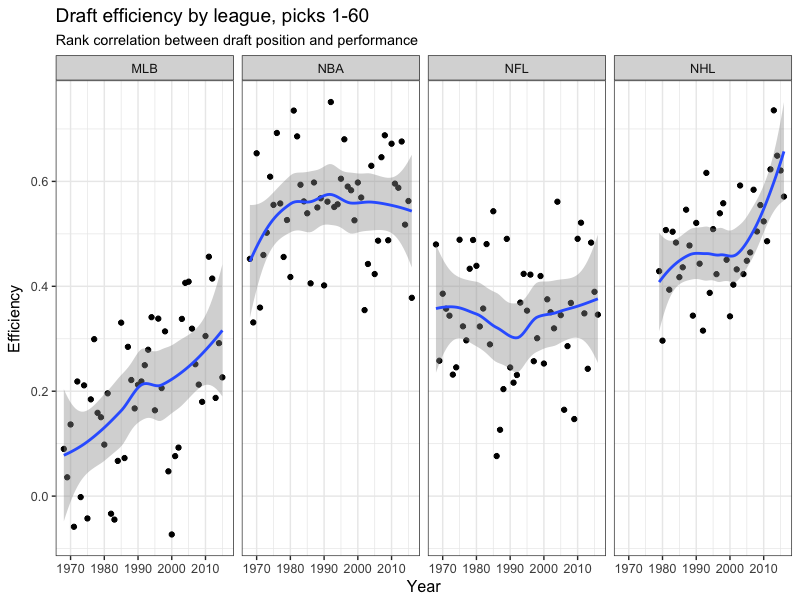

If talent evaluation were worthless we wouldn’t see this. Stars and busts would be spread out mostly evenly through the draft. Instead, though, there is a reasonable correlation between pick number and player performance. Michael Lopez, an Assistant Professor of Statistics at Skidmore and author of the fascinating blog StatsbyLopez, wrote about this in a compelling recent article comparing draft performance across the major American sports. And he found that the NBA has actually had the best talent evaluation of any of the leagues.[4]

So, yes, talent evaluators make plenty of mistakes — but that doesn’t mean it’s complete chaos. We may not know everything about the draft. But we do know something.
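To put a rough number on “something,” here is a small sketch that uses only the bust rates quoted above. It compares how many of the first 10 picks we would expect to become at least high level backups under the actual draft order versus a purely random ordering of the same 60 players. The assumptions are mine: “close to 90%” is rounded to 0.90, each rate is treated as a fixed property of the players historically taken in that range, and the function names are hypothetical.

```python
# Share of picks that did NOT become at least high level backups,
# as quoted in the article for the 2003-2012 drafts.
# Assumption: "close to 90%" for picks 41-60 is rounded to 0.90.
BUST_RATE_BY_RANGE = {
    (1, 10): 0.30,
    (11, 20): 0.60,
    (21, 40): 0.75,
    (41, 60): 0.90,
}


def success_rate_for_pick(pick: int) -> float:
    """Historical chance that a player taken at `pick` became at least a high level backup."""
    for (first, last), bust_rate in BUST_RATE_BY_RANGE.items():
        if first <= pick <= last:
            return 1.0 - bust_rate
    raise ValueError(f"pick {pick} is outside the 60-pick draft")


def expected_hits_actual_order(first_n_picks: int) -> float:
    """Expected number of successes among the first N picks in the real draft order."""
    return sum(success_rate_for_pick(p) for p in range(1, first_n_picks + 1))


def expected_hits_random_order(first_n_picks: int, total_picks: int = 60) -> float:
    """Expected successes among the first N picks if the same players were drawn at random.

    With a random ordering, every slot carries the pool-wide average success rate.
    """
    pool_average = (
        sum(success_rate_for_pick(p) for p in range(1, total_picks + 1)) / total_picks
    )
    return first_n_picks * pool_average


if __name__ == "__main__":
    print("actual order:", round(expected_hits_actual_order(10), 1))
    print("random order:", round(expected_hits_random_order(10), 1))
```

With these inputs the actual order yields about 7 useful players in the top 10, against about 3 for a random draw. That is a back-of-the-envelope comparison rather than a proper study, but it shows how far the historical record sits from pure chance.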

So let’s keep going with the analogy of drafting out of a hat. I’d challenge Gladwell: if you don’t think there’s any predictability to talent evaluation then let’s do a test. You can put all the names in the draft into a hat and pick them out randomly. I will do the same, except with one change: I will use two hats. In the first I’ll place the names of the players who are generally thought of as the most likely top picks: Markelle Fultz, Lonzo Ball, and Josh Jackson. And in the second hat I’ll place the rest of the names. I’ll draw out of Hat #1 for my first three picks and then Hat #2 for the rest of my picks. In five years we’ll look back and see whose was a more accurate ordering of future NBA success. I’d bet a fair amount of money that the Two Hat System will perform far better than the One Hat System.

Of course that’s not really fair. Later in the discussion with Simmons, Gladwell conceded that we do a fair job of evaluating the top players. “We can identify the three players who we think are reasonably good, can’t miss prospects,” he said. But he felt like once you get deeper, to the bottom of the first round for example, then it becomes almost complete luck.

Except, as we saw earlier, this too isn’t correct. So I’ll revise my Hat Draft Challenge. In keeping with his comments, Gladwell can use my newly patented Two Hat System. Meanwhile, I’ll split all the names up into a series of hats based on what we know about the players: the scouting consensus, their college statistical production, their age, their measurables (height, wingspan, etc.). There won’t even have to be a large number of hats – I would just loosely divide them into a few rough categories. And, once again, I would bet that when we look back in five years it wouldn’t even be a contest. The performance of the Many Hat System would blow away that of the Gladwellian Two Hat System.

I’m joking about this challenge, of course. But I do think this is a useful framework to think about drafting: loosely grouping players into buckets based on what we know about them, while at the same time being willing to acknowledge all that we don’t know. A lot of draft discussion (both inside and outside teams) is filled with enormous conviction, with precise orderings, with opinions presented as facts. The culture of the NBA and the media is such that expressing thoughts that match the actual uncertainty involved in the decision is discouraged. If you say: “Here’s what I think, but I’m not really sure” you’re accused of dancing around the question and not taking a stand.

So we make big claims, get into heated debates, and become convinced that we can see the future more accurately than anyone else. And sure, it’s fun — but it’s not right.

Instead let’s approach the draft from the other direction. Let’s not start with what we know, but what we don’t know. Let’s keep in mind the bigger historical picture: the hits and misses, the huge surprises, the mistakes made. Let’s discuss our opinions with the proper amount of intellectual humility. As the Nobel Prize-winning physicist Richard Feynman said: “I think that when we know that we actually do live in uncertainty, then we ought to admit it; it is of great value to realize that we do not know the answers to different questions.”

But let’s also remember that we do know something. Simmons and Gladwell concluded their discussion about drafting with the following exchange:

Simmons: “You know, there’s no real science to it—”

Gladwell: “Well that’s my point.”

But there is. It’s the science of decision making under uncertainty, and it’s what the draft is all about. Which I’ll address next week, in Part 2. Stay tuned.

1. I chose 2012 as the cutoff point since it’s at least far enough away that we have a decent sense of what those players turned into.

2. And of course it’s extremely difficult to tell whether this is the case, even for the decision makers themselves and even in retrospect. Often there are no clear answers as to why a pick worked or didn’t.

3. Kudos to Myers for having the humility and courage to admit publicly what many executives won’t: the role of luck in many decisions.

4. For reasons he touches on and which I’ll address more at a later point.